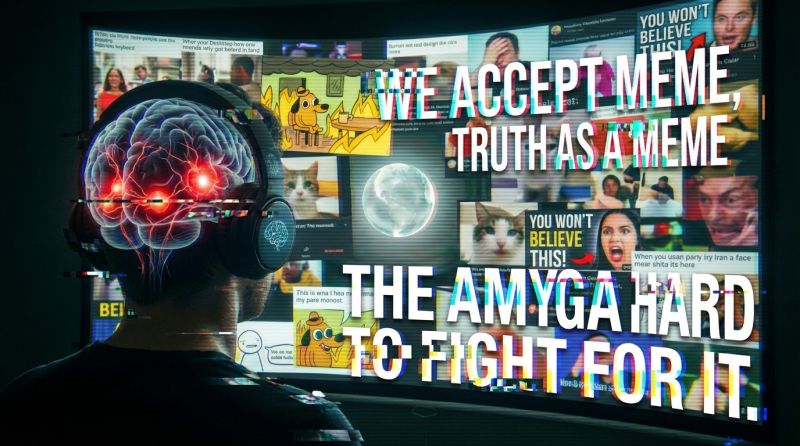

You don't see what you're seeing, until you see it;

but when you do see it... it lets you see many other things.

- William Thurston -

It's not what you look at that matters, it's what you see.

- Henry David Thoreau -

The eye sees only what the mind is prepared to comprehend.

- Robertson Davies -

Everything we hear is an opinion, not a fact. Everything we see is a perspective, not the truth.

- Marcus Aurelius -

You can't depend on your eyes when your imagination is out of focus.

- Mark Twain -

We don't see things as they are, we see them as we are.

- Anaïs Nin -

All significant breakthroughs are break-'withs' old ways of thinking.

- Thomas Kuhn -

A mind is like a parachute. It doesn't work if it is not open.

- Frank Zappa -

The question is not whether intelligent machines can have emotions,

but whether machines can be intelligent without them.

- Marvin Minsky -

No price is too high to pay for the privilege of owning yourself.

- Friedrich Nietzsche -

Freedom is what we do with what is done to us.

- Jean-Paul Sartre -

Do not allow yourselves to be deceived: Great Minds are Skeptical.

- Friedrich Nietzsche -

There are no facts, only interpretations.

- Friedrich Nietzsche -

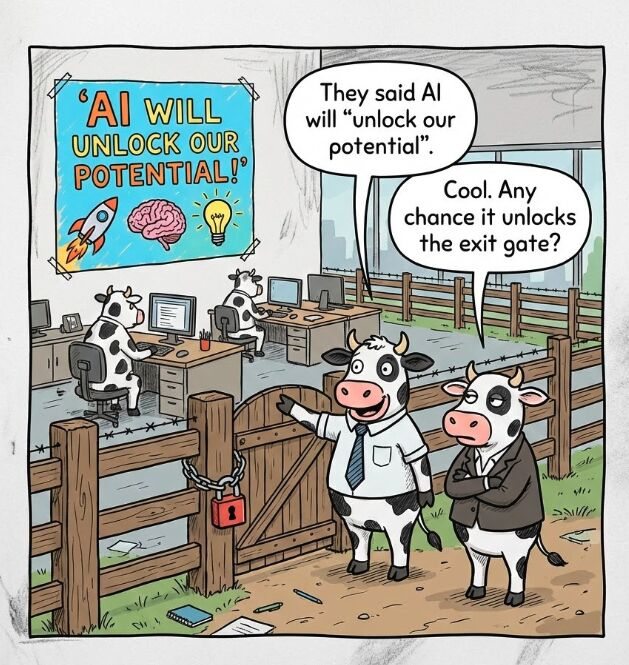

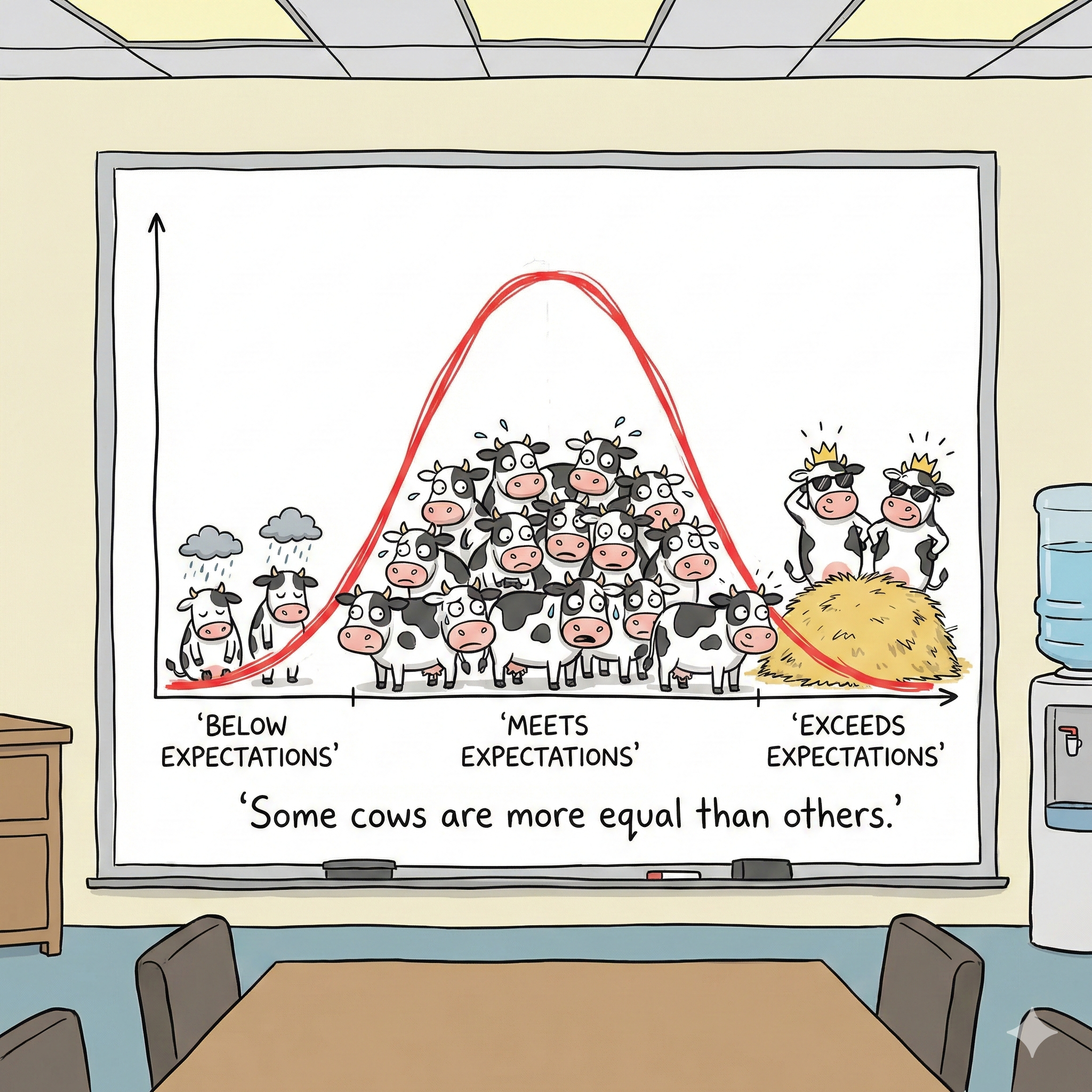

At the dawn of scaling AI . . .

we stand before mirrors of our own species. . .

It is now time to speak, to act, and to ensure that as we mass-deploy synthetic intelligence,

we help it move beyond our recurring imperfections.